Chris Wahl has a good writeup demystifying many LACP myths here.

It should be noted that LACP, despite popular belief, does not make the network load distribution any different or more optimized. This prevents lots of potential network disturbances and makes it much more easy to verify link aggregation configuration. This is the great feature of LACP, meaning that links will not get up in running order if the other side is not a LACP partner. With the command “ show lacp” we could see that the physical switch has successfully found LACP partners on both interfaces and that the link is now up and running in good order. However, with LACP we could see if the setup of link aggregation group was completed or not. In ordinary static trunk/etherchannel we can not really know if the links are correctly configured and cables properly patched, which is often responsible for a number of network issues. Now we get to the real advantage of the dynamic LACP protocol: we could verify the result. The default mode on HP LACP is active, which means that the switch will now start negotiating LACP with the other side, here our ESXi 5.1 host. (“ Port Channel” or “ Etherchannel” on Cisco devices.) The command above creates a logical port named Trk1 from the physical ports A13 and A14 using LACP.

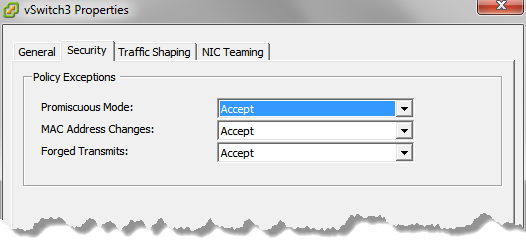

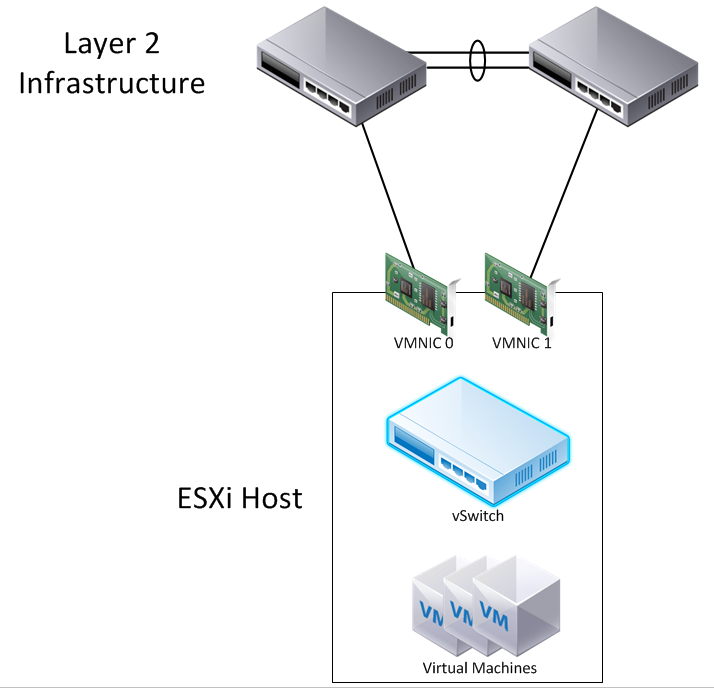

In the HP command line interface a collection of switch port in a Link Aggregation Group is called “ Trunk“. As displayed earlier we could use the LLDP feature to find the actual switchports connected to the ESXi host, in this example the ports are called A13 and A14. In this example we have a HP Switch (Procurve 5406-zl) and the commands shown here will work on all HP switches (excluding the A-series). We must now complete the setup on the physical switch. The LACP setting is only configured on the Uplink-portgroup. This means that the physical switch must start the LACP negotiation.Īll other portgroups on the Distributed vSwitch must be set to “ IP Hash“, just as in earlier releases. Passive means in this context that the ESXi vmnics should remain silent and do not send any LACP BPDU frames unless something on the other side initiates the LACP session. We can now select either default “passive” or “active” mode. Change from default Disabled to Enabled to active LACP. Note that you must use the Web Client to reach this setting. In the Uplink portgroup settings we have the new option of enabling LACP. This information is needed soon, but we must first setup the LACP connection on the ESXi host. Above we can see that vmnic2 and vmnic3 from the ESXi host is connected to certain physical switch ports. Now it is easy to verify the exact ports on the physical switch attached to the specific ESXi host. Above is the new configuration box on the Web Client of vSphere 5.1. This could either be a new Distributed vSwitch or an already existing 4.0, 4.1 or 5.0 switch that is upgraded to version 5.1.Įven if it is not needed technically for LACP, it is always good to enable LLDP to make the configuration on the physical switch simpler. To use dynamic Link Aggregation setup you must have ESXi 5.1 (or later) together with virtual Distributed Switches version 5.1 (or later). 
On the earlier releases of ESXi the virtual switch side must have the NIC Teaming Policy set to “ IP Hash” and on the physical switch the attached ports must be set to a static Link Aggregation group, called “ Etherchannel mode on” in Cisco and “ Trunk in HP Trunk mode” on HP devices. Some common configuration errors did however this kind of setup somewhat risky, but will now be easier in ESXi 5.x. To be able to combine several NICs into one logical interface correct configuration is needed both on the vSwitches and on the physical switches. With vSphere 5.1 and later we have the possibility of using LACP to form a Link Aggregation team with physical switches, which has some advantages over the ordinary static method used earlier. LACP – Link Aggregation Control Protocol is used to form dynamically Link Aggregation Groups between network devices and ESXi hosts.

NIC TEAMING VMWARE ESXI 6 HOW TO

How to configure and verify the new LACP NIC Teaming option in ESXi. LACP support is available on ESXi 5.1, 5.5 and 6.0.
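The passive/active behavior and the "no partner, no link" rule described above can be modeled in a few lines. This is a simplified sketch, not VMware or HP code.

- `LacpMode`, `aggregation_forms` and `bundled_ports` are hypothetical names.
- The model only captures two things: the mode rule (at least one end must be active) and whether a partner was detected on each port.
- The port names A13 and A14 come from the example. The partner results are made up.

```python
from __future__ import annotations

from enum import Enum


class LacpMode(Enum):
    """Simplified LACP port modes (hypothetical model, not a real API)."""
    ACTIVE = "active"    # sends LACPDUs on its own initiative
    PASSIVE = "passive"  # only answers LACPDUs received from the partner


def aggregation_forms(local: LacpMode, partner: LacpMode) -> bool:
    """Return True if LACP negotiation can start between two ends.

    At least one end must be active; two passive ends both wait for
    the other side and never exchange LACPDUs. Speed, duplex, keys
    and cabling are deliberately ignored in this sketch.
    """
    return LacpMode.ACTIVE in (local, partner)


def bundled_ports(partner_found: dict[str, bool]) -> list[str]:
    """Return the ports that join the group: only those with a detected partner."""
    return sorted(port for port, found in partner_found.items() if found)


if __name__ == "__main__":
    # ESXi uplink portgroup in default passive mode, HP trunk in its default active mode.
    print(aggregation_forms(LacpMode.PASSIVE, LacpMode.ACTIVE))   # True
    # Both ends passive: nobody starts the negotiation.
    print(aggregation_forms(LacpMode.PASSIVE, LacpMode.PASSIVE))  # False
    # Hypothetical result: the cable to A14 is patched to the wrong host.
    print(bundled_ports({"A13": True, "A14": False}))             # ['A13']
```

With a static trunk there is no equivalent of `bundled_ports`: every configured port carries traffic whether or not the far end agrees.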
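To see why LACP by itself does not change the load distribution, it helps to look at how an IP-hash teaming policy picks an uplink.

The sketch below uses a common simplified model: XOR the source and destination IPv4 addresses, then take the result modulo the number of uplinks. This model is an assumption for illustration, not VMware's documented implementation. `ip_hash_uplink` is a hypothetical name and the addresses are made up.

The function's only inputs are the address pair and the number of uplinks. The result is therefore the same whether the group was built statically or negotiated with LACP.

```python
import ipaddress


def ip_hash_uplink(src: str, dst: str, uplink_count: int) -> int:
    """Pick an uplink index for an IPv4 src/dst pair.

    Simplified IP-hash model (assumption, not VMware's exact algorithm):
    XOR the two 32-bit addresses and reduce modulo the uplink count.
    Nothing here depends on whether the group is static or LACP-negotiated.
    """
    if uplink_count < 1:
        raise ValueError("uplink_count must be at least 1")
    src_int = int(ipaddress.IPv4Address(src))
    dst_int = int(ipaddress.IPv4Address(dst))
    return (src_int ^ dst_int) % uplink_count


if __name__ == "__main__":
    # Two uplinks, for example vmnic2 and vmnic3; addresses are hypothetical.
    print(ip_hash_uplink("10.0.0.10", "10.0.0.20", 2))  # 0
    print(ip_hash_uplink("10.0.0.10", "10.0.0.21", 2))  # 1
    # The same address pair maps to the same uplink in either direction.
    print(ip_hash_uplink("10.0.0.20", "10.0.0.10", 2))  # 0
```

Each source/destination pair always lands on the same uplink. A single conversation between two hosts therefore uses only one physical NIC, with or without LACP.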